Everyone’s Talking About Vibe Coding. Nobody’s Talking About This.

Mar 9 (updated Mar 11)

Signal & Noise | Michael Vicenzino

I write about navigating careers, tech, and what’s next in the age of AI. If you’re new here, welcome.

Why It Matters:

The Shift: The most valuable skill in 2026 isn’t writing code — it’s describing what you want clearly enough that AI can build it for you.

The Proof: MIT Technology Review named “generative coding” one of its 10 Breakthrough Technologies of 2026. AI now writes 30% of Microsoft’s code and over 25% of Google’s.

The Catch: The “just vibe it” takes are dangerously incomplete. The real skill behind AI-assisted building is something nobody’s talking about — and it’s something you can learn.

I want to tell you how AI-assisted building actually works. What it is. What it isn’t. And how you can start using it — even if you’ve never written a line of code in your life.

But before I do, I want to tell you about a moment that changed how I think about all of this.

It was early morning, probably 6 a.m. on a Tuesday. I was sitting at my desk describing, in plain English, the family operating system I wanted to create: one that would handle scheduling logistics, invoice management, daily real-time notes and alerts, messaging, and medication management for an elderly person’s caregivers. Not writing code. Just explaining the problem like I was talking to a colleague. And within minutes, I was looking at a working feature on my screen.

I remember staring at it and thinking: I didn’t know how to do much of this six months ago. And I still don’t know how to code this. But it’s right there. And it works.

That’s the moment I realized something fundamental had shifted — not just for me, but for anyone willing to learn a new way of building.

There’s actually a name for what I was doing. And it started with one of the most respected people in AI.

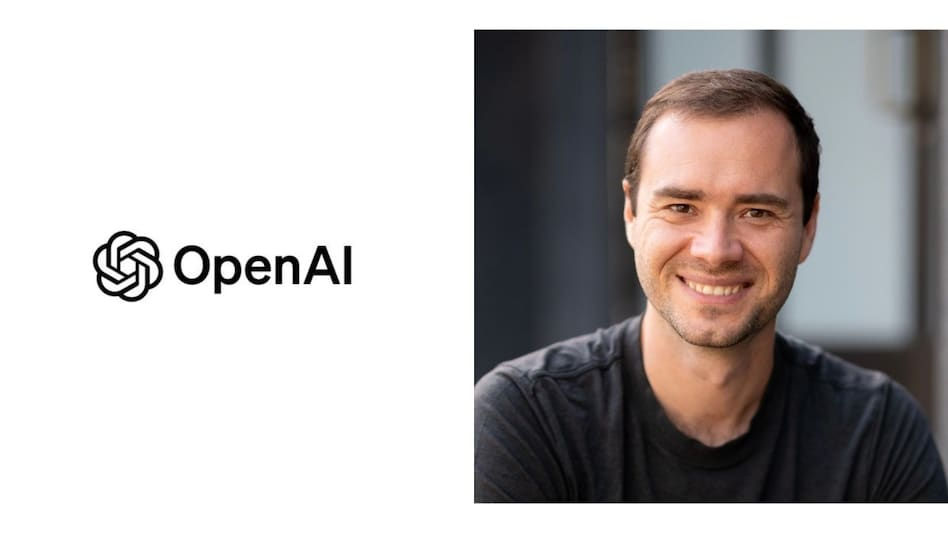

A year ago, Andrej Karpathy — co-founder of OpenAI, former head of AI at Tesla — posted something on X that stopped the tech world in its tracks.

He said he’d been building software in a completely new way. No syntax. No debugging in the traditional sense. Just describing what he wanted and letting AI handle the rest.

He called it “vibe coding.”

Surely, you’ve heard of it by now.

His exact words: “Fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

The post went viral. Millions of views. Debates erupted across every tech forum on the internet. Collins Dictionary named “vibe coding” their Word of the Year for 2025.

And then, in January 2026, MIT Technology Review made it official — they named generative coding one of the 10 Breakthrough Technologies of 2026.

This isn’t a trend anymore. It’s a shift.

Andrej Karpathy - Source: Business Today

And here’s the thing — the magic is real. I’ve seen it in my own work. To say it can make you 10x more productive is a gross understatement.

Over the past year, I’ve been building real, working applications using AI as my development partner. Not toy projects. Not demos. Fully functioning tools that solve problems I actually have — a personal command center that connects to my bank, email, tasks, travel plans, calendar, project planning, and AI Agents all in one place. A career platform that scores how well your resume matches a job posting. A family OS built for parents juggling kids and aging parents at the same time.

A year ago, each of these would have required a small to medium-sized team and months of work. I built them as one person, mostly through conversation with AI tools.

And the speed still catches me off guard. Recently, I needed to add a new feature to one of these projects. What would have been a full day of work — maybe more — was done in under an hour. Not because I cut corners, but because the AI understood my projects’ design and architectural patterns and generated clean, consistent code that fit right in.

That velocity is the “vibe” part. You describe what you want, the AI understands the context, and working software materializes.

And it’s evolving fast. I’ve recently started experimenting with what the cool kids call agentic workflows — instead of working with one AI, you coordinate several AI agents that each handle a different part of the job.

One focuses on the code. Another reviews it for problems. Another handles the UX and functional QA testing. It’s like going from having one smart collaborator to having a small team of specialists, each doing what they’re best at.

The direction is clear — and it’s moving faster than most people realize.

But everyone’s so focused on the magic that they’re missing the other 80% of the work beyond the vibes.


Let me explain what’s actually happening under the surface, because the name “vibe coding” makes it sound way more casual than it really is.

Here’s the basic idea. You open an AI tool — could be Claude Code, Cursor, Replit, Lovable, or a dozen others — and you describe what you want to build. In English. In your own words.

Not “make me a cool to-do app.”

More like: “I’m building a family calendar for my family and for my mom’s care team. Each family member gets their own color. Caregivers can see the schedule but can’t change it. I need a medication tracker that logs what was given, when, and who gave it, and sends an alert if a dose gets missed. Everything needs to sync across devices in real time.”

See the difference? The second one isn’t code. It’s a clear description of a problem — who needs what, how it should behave, and what matters most. The more specific and structured your instructions, the better the AI performs. Vague in, vague out.

And this is a mindset shift most people haven’t made yet. We’ve been trained by Google (who’s currently killing it with embedded Gemini tools and their AI Studio) to treat AI the way we treat a search box: type a quick question, get a simple answer.

But AI building tools aren’t search engines. You don’t ask them a question. You brief them like a working partner. You give context. You explain the goal. You describe what success looks like. The more you treat it like a collaboration and less like a search bar, the better the results get.
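If it helps to see that briefing habit as a structure, here is a minimal Python sketch. The `FeatureBrief` class and its field names are made up for illustration; no AI tool requires this shape. It only shows the idea of spelling out the goal, the users, the behavior, and what success looks like before you start the conversation.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureBrief:
    """Hypothetical container for the parts of a good brief."""
    goal: str                                            # what you are building, and for whom
    users: list[str] = field(default_factory=list)       # who uses it and what each may do
    behaviors: list[str] = field(default_factory=list)   # how it should behave
    success: list[str] = field(default_factory=list)     # what "done" looks like

    def to_prompt(self) -> str:
        """Render the brief as plain text you could paste into any AI tool."""
        sections = [
            ("Goal", [self.goal]),
            ("Users", self.users),
            ("Behavior", self.behaviors),
            ("Success looks like", self.success),
        ]
        lines = []
        for title, items in sections:
            if not items:
                continue  # skip empty sections rather than print a bare heading
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
            lines.append("")
        return "\n".join(lines).strip()


brief = FeatureBrief(
    goal="A family calendar for my family and my mom's care team.",
    users=[
        "Family members: each gets their own color.",
        "Caregivers: can see the schedule but can't change it.",
    ],
    behaviors=[
        "Medication tracker logs what was given, when, and who gave it.",
        "Send an alert if a dose gets missed.",
    ],
    success=["Everything syncs across devices in real time."],
)
print(brief.to_prompt())
```

The code itself matters less than the checklist it enforces: if one of those sections is empty, the brief probably is too.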

The AI takes that description, understands the intent, generates the code, and shows you a working version. You interact with it. You give feedback. “The sidebar is too wide.” “Add a button that lets me switch between week and month views.” “When a caregiver marks a medication as given, show a confirmation micro-animation and log the timestamp.” “If someone tries to submit the form without filling in the required fields, highlight what’s missing instead of just showing an error.” And it adjusts. Each round gets you closer.

Back and forth. Like a conversation.

That part is genuinely revolutionary. But here’s what nobody tells you.

The tech stack doesn’t care about vibes

When I first got one of my projects running, I hit a wall that no amount of prompting could bypass. A real-time connection between my app’s frontend and backend kept silently dying — no error message, no warning, it just stopped working. Imagine your Wi-Fi keeps dropping, but your router says everything’s fine. The problem isn’t the Wi-Fi — it’s something deeper in how your devices talk to each other. The actual fix took minutes. Finding it took hours.
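The story above doesn’t say what the actual cause or fix was, so the following is not that fix. It is a sketch of one general way to make a silent failure visible: track when the last message arrived and flag the connection as stale once it has been quiet too long. `HeartbeatMonitor` and the 30-second timeout are assumptions chosen for illustration.

```python
import time


class HeartbeatMonitor:
    """Flags a connection as stale when no message has arrived recently."""

    def __init__(self, timeout_seconds: float, clock=time.monotonic):
        self.timeout_seconds = timeout_seconds
        self._clock = clock            # injectable so the logic can be tested
        self._last_seen = clock()

    def record_message(self) -> None:
        """Call this whenever any message or ping arrives."""
        self._last_seen = self._clock()

    def is_stale(self) -> bool:
        """True when the connection has been quiet longer than the timeout."""
        return self._clock() - self._last_seen > self.timeout_seconds


# Demo with a fake clock so no real waiting is needed.
fake_now = [0.0]
monitor = HeartbeatMonitor(timeout_seconds=30, clock=lambda: fake_now[0])

fake_now[0] = 10.0
print(monitor.is_stale())  # quiet for 10 seconds, inside the 30-second budget

fake_now[0] = 45.0
print(monitor.is_stale())  # quiet for 45 seconds: time to reconnect or alert
```

A check like this turns “it just stopped working” into a specific, describable symptom, which is the kind of thing an AI tool can then help you chase down.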

Then my database started rejecting connections with a cryptic error. Turns out, there are two different ways a program can talk to a database — think of it like calling someone’s cell phone versus their landline. My app was dialing the landline, but the database was only picking up on the cell. AI couldn’t diagnose it because the error message was basically “call failed” without saying why.

None of this is “vibe” work. This is where the conversation with AI ends and your own problem-solving begins.

And it gets even trickier once your app leaves your laptop.

One of my projects worked perfectly on my machine, then broke the moment it went live on other devices (desktop browsers, mobile phones, and iPads). Same code. Different environment. It’s like rehearsing a presentation on your laptop where everything looks great, then plugging into the projector at the client’s office and finding half the slides won’t load. The actual fix took two minutes.

Figuring out what to fix — that was the hard part.

I also ran a security audit — basically checking all the locks on the doors and windows. Found 23 gaps. AI agents helped me fix every one of them. But I had to know which doors and windows to check in the first place. That awareness is something you build through experience, not through prompts.

So what’s the actual skill here?

The hardest part of building with AI isn’t writing code. It’s making decisions.

My projects all have AI assistants built into them — you can ask questions in plain English. Early on, I noticed 80% of the questions were simple. “What’s the weather?” “Show me today’s events.” But the AI was treating every question like a research paper — taking 5-10 seconds to respond to something that should be instant.

So I designed a two-lane highway. Simple questions take the express lane. Complex questions like “compare my spending this month to last month” take the full route with deep analysis.

That’s not something you prompt an AI to figure out. That’s a design insight from watching real usage and thinking “this is too slow for what they’re asking.”
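Here is a rough sketch of what a two-lane setup could look like in Python. How the real projects decide which lane a question belongs in isn’t described above, so the keyword list, the word-count cutoff, and the names `choose_lane` and `answer` are all assumptions. A production version might use a small, fast model to classify instead.

```python
import string

# Hypothetical hint words that suggest a question needs deeper analysis.
COMPLEX_HINTS = {"compare", "trend", "analyze", "summarize", "why"}


def choose_lane(question: str, max_simple_words: int = 8) -> str:
    """Return "express" for short lookups and "full" for analytical questions."""
    words = [w.strip(string.punctuation) for w in question.lower().split()]
    if any(w in COMPLEX_HINTS for w in words):
        return "full"
    if len(words) > max_simple_words:
        return "full"  # long questions get the benefit of the doubt
    return "express"


def answer(question: str, express_handler, full_handler) -> str:
    """Send the question to whichever handler matches its lane."""
    handler = express_handler if choose_lane(question) == "express" else full_handler
    return handler(question)


# Stub handlers standing in for a fast lookup and a slower, deeper analysis.
def quick(question: str) -> str:
    return f"[express] {question}"


def deep(question: str) -> str:
    return f"[full] {question}"


for q in ("What's the weather?", "Compare my spending this month to last month"):
    print(answer(q, quick, deep))
```

The classifier is deliberately crude. The design decision is the routing itself: cheap questions never wait in line behind expensive ones.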

Same with project structure. I’ve landed on a repeatable pattern now: every new feature gets built the same way, in the same order, with checks and confirmations along the way.

But the pattern itself came from building it wrong three or four times first. AI can follow a pattern brilliantly. Creating the right pattern — that's human work.

And here’s where it gets powerful. Once you’ve figured out a pattern that works, you can save it as a reusable set of instructions — in Claude Code, they’re called “Skills” — that the AI can apply to any project, not just the one you built it for. Need to create a document with a specific format every time? That’s a Skill. Need to build and test new features using the same layered approach? That’s a Skill. Need to generate a weekly report from a set of data? Skill.

You’re essentially teaching the AI how you work — and once it knows, it can repeat that process faster and more consistently than you could on your own.

Here’s the honest breakdown I wish someone had given me before I started:

AI can generate code from clear descriptions — but “build me a dashboard” and “build me a dashboard that shows these five metrics, updates every 30 seconds, and works on mobile” produce wildly different results. Specificity is everything.

AI follows whatever rules you’ve set up in your project — consistently and without complaint. But someone has to define those rules in the first place.

And AI can fix problems you can clearly describe. But the hardest bugs are the ones you can’t explain yet — the ones where something just feels wrong and you have to dig until you find the real cause.

“The vibe is the velocity. The skill is the direction.”

Here’s something most people don’t realize: AI tools might remember your name or a few preferences between conversations, but they don’t remember your project. They don’t know the architecture decisions you made last week, the bug that took three hours to fix, or the pattern you want every new feature to follow. When it comes to complex building, every session essentially starts from scratch.

So I started writing things down — in a very specific way.

There’s a simple file format called Markdown, saved with the .md extension. Just plain text with light formatting. You could write one in any text editor or Google Docs. I keep a set of these files at the center of every project.

Think of them as giving your AI partner a brain and a memory.

The brain is a file I call CLAUDE.md. It holds everything the AI needs to know about the project: what we’re building, who it’s for, how the pieces connect, and what rules to follow. The AI reads it at the start of every session.

The memory is a separate file where I log every lesson and mistake. Every time something breaks, I write down why. Over time, this becomes a living document of hard-won knowledge — and because the AI reads it every session, it effectively learns from my experience.

Here’s a very simplified version of what these files look like:

# Memory — Kindora Family OS

## What This Project Is

A shared family calendar and AI coaching platform for parents juggling childcare and eldercare.

## Rules

• Build one feature at a time. Test it before starting the next one.

• Use clear, descriptive names for everything.

• Never change the database without documenting it first.

## Lessons Learned (The Hard Way)

• Always check that existing features still work after making changes to the display.

• When updating multiple files at once, test the full experience end-to-end before moving on.

## Current Status

• Calendar: in final testing

• AI Coach: working, integration in progress

• Care Team module: next up

Plain English. Organized with simple headers. No code required.

The result? The AI gets smarter about your project and your working style over time — not because it has built-in memory, but because you’re building that memory for it. Every session starts where the last one left off. Mistakes don’t repeat. Conventions stay consistent. The AI goes from being a generic tool to feeling like a partner who actually knows your work.
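Writing these files by hand works fine. If you would rather script the logging step, a minimal Python sketch might look like the following. The heading text matches the sample file above; the function names, the standard Markdown `-` bullet, and the idea of a file named `MEMORY.md` are assumptions for illustration, not part of any tool.

```python
from pathlib import Path

LESSONS_HEADING = "## Lessons Learned (The Hard Way)"


def add_lesson(markdown: str, lesson: str, heading: str = LESSONS_HEADING) -> str:
    """Return the memory text with one new bullet at the end of the lessons section."""
    lines = markdown.splitlines()
    bullet = f"- {lesson}"
    if heading not in lines:
        # No lessons section yet: add one at the end of the file.
        prefix = lines + [""] if lines else []
        return "\n".join(prefix + [heading, "", bullet]) + "\n"
    start = lines.index(heading) + 1
    # The section ends at the next "## " heading, or at the end of the file.
    end = next(
        (i for i in range(start, len(lines)) if lines[i].startswith("## ")),
        len(lines),
    )
    # Step back over trailing blank lines so the bullet joins the existing list.
    insert_at = end
    while insert_at > start and not lines[insert_at - 1].strip():
        insert_at -= 1
    lines.insert(insert_at, bullet)
    return "\n".join(lines) + "\n"


def log_lesson(path: Path, lesson: str) -> None:
    """Read the memory file (for example a hypothetical MEMORY.md), add the lesson, save it."""
    text = path.read_text(encoding="utf-8") if path.exists() else ""
    path.write_text(add_lesson(text, lesson), encoding="utf-8")


memory = (
    "# Memory\n\n"
    "## Lessons Learned (The Hard Way)\n\n"
    "- Test the full experience end-to-end before moving on.\n\n"
    "## Current Status\n\n"
    "- Calendar: in final testing\n"
)
print(add_lesson(memory, "Check existing features after display changes."))
```

The new bullet lands at the end of the lessons list and the other sections stay untouched, so the file stays readable for both you and the AI.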

Every project I build now starts with these files before a single line of code gets written. The vision. The decisions. The rules. The memory.

They became the single most valuable files in every project I’ve touched.

Now — here’s where the conversation usually goes sideways.

Someone hears “you don’t need to code” and immediately thinks I’m saying engineers are being replaced. Or that anyone can ship a production-ready application by talking to a chatbot for an afternoon.

That’s not what I’m saying.

Professional software engineering — the kind that involves security at scale, team coordination, and long-term maintenance — still requires deep expertise. The MIT Technology Review article itself points out that AI-generated code can hallucinate, introduce vulnerabilities, and struggle with complex systems.

But here’s the thing most people miss: that’s the professional engineering bar. And most of you reading this aren’t trying to build the next Salesforce.

You’re trying to build a tool that solves a specific problem in your life or work. A dashboard. A scheduling system. A tracker. A prototype that’s been living in your head for two years. Maybe even something you can share with others as a side hustle — or the start of a new business venture.

For that? The barrier just dropped to nearly zero.

Think about what this means for your career.

The World Economic Forum keeps publishing the same finding: the skills that matter most going forward are creative thinking, analytical thinking, curiosity, and technological literacy.

Not technological mastery. Literacy. Agency. And ambition.

PwC found that workers with AI skills earn significantly more than their peers in the same roles. Not because they’re writing Python. Because they know how to leverage the tools.

The gap isn’t between coders and non-coders anymore. It’s between people learning to work with AI and people still pretending it’s someone else’s job.


But here’s the part almost nobody in the “future of work” conversation is talking about.

Everything I just described — the AI fluency, the clear thinking, the ability to describe a problem and iterate toward a solution — those skills don’t just make you a better employee. They make it possible, for the first time, to build something of your own.

Think about that for a second.

Five years ago, if you had an idea for a product — a tool, an app, a service — you had three options. Learn to code (months or years), hire someone who could (thousands of dollars, minimum), or go chasing seed funding and VCs (yikes). Most ideas died right there. Not because they were bad ideas, but because the cost of building was too high for one person to absorb.

That wall is coming down. Fast.

I’m still figuring this out — I want to be upfront about that. I haven’t shipped a product to paying customers yet. But I’m planning to. Soon. A career platform, a family operating system, a personal command center — and quietly, the early stages of an AI consulting practice helping other businesses figure this stuff out too. All built as one person, mostly through conversation with AI tools. The total cost? My time and a few software subscriptions.

And when I do ship, I’m doing it publicly — right here on Signal & Noise. Sharing what works, what doesn’t, what breaks, and what I learn along the way. This newsletter isn’t just where I write about the AI era. It’s where I’m building through it, in real time, with all of you watching. Fail fast, adjust faster. That’s the only way to build something real.

A year ago, I wouldn’t have believed I could get this far. And that’s the point.

The same skills that help you land a better job — describing problems clearly, thinking in systems, working with AI tools — are the exact same skills that let you build a side project, test a small product idea, or start working toward something entirely your own. The path to self-employment used to require either deep technical skills or deep pockets. Now it requires clear thinking, a real problem worth solving, and the patience to learn as you go.

I’m not saying everyone needs to become a founder. I’m still working toward that myself. But I am saying that for the first time, the option feels real for people who never had it before. And in an economy where layoffs happen by spreadsheet and entire departments get restructured overnight, having the ability to build your own thing isn’t just exciting — it’s a safety net I wish I’d started building sooner.

So if you’ve got an idea — a tool you wish existed, a process you want to automate, a problem you’ve been solving with spreadsheets and sticky notes — here’s what I’d tell you.

Start small. One specific, annoying, real problem. Describe it out loud, like you’re explaining it to a smart colleague who’s never seen your workflow. Then open a tool — Claude, Cursor, Replit, Gemini, Antigravity, any of them — and have that conversation.

You’ll be surprised what comes back.

And when it breaks — because it will — don’t panic. Read the error message before you paste it back into the AI. Add screenshots. Give it your perspective and creative ideas for how to fix it. That instinct — curiosity before shortcuts — is the difference between someone who builds real things and someone who generates code that falls apart on day two.

The magic is real. But magic without understanding is just luck — and luck doesn’t debug production at 2am.


The question isn’t whether you should learn to code. The question is whether you’re willing to learn to think clearly, describe precisely, and build alongside a machine that can move at the speed of your imagination.

The barrier to building just disappeared.

So, what are you going to build?

Next edition, I’ll walk you through something I’ve been building: something that started as a personal frustration and turned into a product I’d love for any of you to try and critique. I’m building in public so you get a better product.

Stay tuned and thanks for reading.
